How does NERC pricing work?

As a new PI using NERC for the first time, am I entitled to any credits?

Yes. As a new PI using NERC for the first time, you'll receive up to $1000 in credits for the first month only. This credit cannot be carried over to the following months, and it does not apply to GPU resource usage.

NERC offers a pay-as-you-go pricing approach for our cloud infrastructure offerings (Tiers of Service), including Infrastructure-as-a-Service (IaaS) – Red Hat OpenStack and Platform-as-a-Service (PaaS) – Red Hat OpenShift. The exception is the Storage quotas on NERC Storage Tiers, where the cost is determined by your requested and approved allocation values to reserve storage from the total NESE storage pool.

For NERC (OpenStack) Resource Allocations, storage quotas are specified by the "OpenStack Volume Quota (GiB)" and "OpenStack Swift Quota (GiB)" allocation attributes. For NERC-OCP (OpenShift) Resource Allocations, storage quotas are specified by the "OpenShift Request on NESE Storage Quota (GiB)" and "OpenShift Limit on Ephemeral Storage Quota (GiB)" allocation attributes. If you have common questions or need more information, refer to our Billing FAQs for comprehensive answers.

NERC offers a flexible cost model where an institution (with a per-project breakdown) is billed solely for the duration of the specific services required. Access is based on project-approved resource quotas, eliminating runaway usage and charges. There are no long-term contract obligations or complicated licensing agreements. Each institution will enter a lightweight MOU with MGHPCC that defines the services and billing model.

Calculations

Service Units (SUs)

| Name | GPU | vCPU | RAM (GiB) | Current Price |
|---|---|---|---|---|
| H100 GPU | 1 | 124 | 360 | $4 |
| A100sxm4 GPU | 1 | 31 | 240 | $2.078 |
| A100 GPU | 1 | 24 | 74 | $1.803 |
| V100 GPU | 1 | 48 | 192 | $1.214 |
| K80 GPU | 1 | 6 | 28.5 | $0.463 |
| CPU | 0 | 1 | 4 | $0.013 |

Now Available on NERC: NVIDIA H100 GPUs

The cutting-edge NVIDIA H100 80GB GPUs are now available for use with:

🔹 NERC Red Hat OpenShift AI (RHOAI) via JupyterLab workbenches

🔹 NERC OpenShift-based Containers

To get started, read our latest announcement.

Breakdown

CPU/GPU SUs

Service Units (SUs) can only be purchased as a whole unit. We will charge for Pods (summed up by Project) and VMs on a per-hour basis for any portion of an hour they are used, and any VM "flavor"/Pod reservation is charged as a multiplier of the base SU for the maximum resource they reserve.

GPU SU Example:

- A Project or VM with: 1 A100 GPU, 24 vCPUs, 95MiB RAM, 199.2hrs
- Will be charged: 1 A100 GPU SU x 200hrs (199.2 rounded up) x $1.803 = $360.60

OpenStack CPU SU Example:

- A Project or VM with: 3 vCPU, 20 GiB RAM, 720hrs (24hr x 30days)
- Will be charged: 5 CPU SUs due to the extra RAM (20GiB vs. 12GiB (3 x 4GiB)) x 720hrs x $0.013 = $46.80
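The two examples above can be reproduced with a short Python sketch. It is an illustration only, not NERC's billing code: the function names `su_multiplier` and `vm_cost` are hypothetical, the per-SU resources and prices are copied from the Service Units table, and the sketch assumes the multiplier is the largest resource ratio rounded up to a whole SU. Actual charges depend on the predefined flavors.

```python
import math
from decimal import Decimal

# Per-SU resources and hourly prices, copied from the Service Units table.
SU_TYPES = {
    "H100": {"gpu": 1, "vcpu": 124, "ram_gib": 360, "price": Decimal("4")},
    "A100sxm4": {"gpu": 1, "vcpu": 31, "ram_gib": 240, "price": Decimal("2.078")},
    "A100": {"gpu": 1, "vcpu": 24, "ram_gib": 74, "price": Decimal("1.803")},
    "V100": {"gpu": 1, "vcpu": 48, "ram_gib": 192, "price": Decimal("1.214")},
    "K80": {"gpu": 1, "vcpu": 6, "ram_gib": 28.5, "price": Decimal("0.463")},
    "CPU": {"gpu": 0, "vcpu": 1, "ram_gib": 4, "price": Decimal("0.013")},
}


def su_multiplier(su_type, gpus, vcpus, ram_gib):
    """Whole number of SUs needed to cover the largest reserved resource (assumed rule)."""
    su = SU_TYPES[su_type]
    ratios = [vcpus / su["vcpu"], ram_gib / su["ram_gib"]]
    if su["gpu"]:
        ratios.append(gpus / su["gpu"])
    # SUs are only sold as whole units, so round up and charge at least one.
    return max(1, math.ceil(max(ratios)))


def vm_cost(su_type, gpus, vcpus, ram_gib, hours):
    """Cost in USD: SU multiplier x hours (rounded up) x hourly SU price."""
    billed_hours = math.ceil(hours)  # any portion of an hour counts as a full hour
    cost = su_multiplier(su_type, gpus, vcpus, ram_gib) * billed_hours * SU_TYPES[su_type]["price"]
    return cost.quantize(Decimal("0.01"))


# GPU SU example: 1 A100 GPU, 24 vCPUs, 95 MiB RAM, 199.2 hours.
print(vm_cost("A100", gpus=1, vcpus=24, ram_gib=95 / 1024, hours=199.2))
# OpenStack CPU SU example: 3 vCPU, 20 GiB RAM, 720 hours.
print(vm_cost("CPU", gpus=0, vcpus=3, ram_gib=20, hours=720))
```

Prices are held as `Decimal` values so the totals are not affected by binary floating-point rounding.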

Are VMs invoiced even when shut down?

Yes, VMs incur charges as long as they are utilizing resources. Proactively managing your VMs helps optimize usage and reduce unnecessary costs. To avoid being billed for unused resources (i.e., GPU, vCPU, RAM), you can release the underlying compute resources in one of the following two ways:

- By Shelving the VM:

Shelving temporarily shuts down the VM and releases all its compute resources (i.e., GPU, vCPU, RAM), while preserving the disk and metadata. This allows you to resume the VM later without needing to reconfigure it. It's a cost-effective (Recommended) option if you plan to use the VM again in the future.

- By Deleting the VM:

Deleting the VM permanently removes it along with all associated resources, including compute, storage, and network allocations. Choose this option if the VM is no longer needed, as it fully eliminates any future charges.

It is advisable to create a snapshot of your VM prior to deletion to ensure you have a backup of your data and configurations. We strongly recommend detaching any additional volumes from your instance before creating any snapshots.

Please note:

The storage cost is determined by your requested and approved allocation values for the storage quotas defined under "OpenStack Volume Quota (GiB)" and "OpenStack Swift Quota (GiB)" in your NERC (OpenStack) Resource Allocations.

Even if you have deleted all volumes, snapshots, and object storage buckets and objects in your OpenStack project, it's essential to adjust the approved storage values for your NERC (OpenStack) allocation to zero (0). Otherwise, charges will continue to apply based on the approved storage quota.

You can easily scale or reduce your current resource allocations within your project. Follow this guide to request changes using NERC's ColdFront interface.

For common questions or additional information, please refer to our Billing FAQs.

OpenShift CPU SU Example:

- Project with 3 Pods with:
  - Pod i: 1 vCPU, 3 GiB RAM, 720hrs (24hr * 30days)
  - Pod ii: 0.1 vCPU, 8 GiB RAM, 720hrs (24hr * 30days)
  - Pod iii: 2 vCPU, 4 GiB RAM, 720hrs (24hr * 30days)
- Project will be charged:
  - Pod i: 1 CPU SU due to first pod * 720hrs * $0.013
  - Pod ii: 2 CPU SUs due to extra RAM (8GiB vs 0.4GiB (0.1 * 4GiB)) * 720hrs * $0.013
  - Pod iii: 2 CPU SUs due to more CPU (2vCPU vs 1vCPU (4GiB / 4)) * 720hrs * $0.013
  - Total: RoundUP(Sum(720 * (1 + 2 + 2))) * $0.013 = $46.80

How to calculate cost for all running OpenShift pods?

Expressed as a function, the calculation for OpenShift pods is:

Project SU HR count = RoundUP(SUM(Pod1 SU hour count + Pod2 SU hr count + ...))

OpenShift Pods are summed up to the project level so that fractions of CPU/RAM that some pods use will not get overcharged. There will be a split between CPU and GPU pods, as GPU pods cannot currently share resources with CPU pods.
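The project-level formula can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not NERC's invoicing code: the names `pod_su_hours` and `project_cost` are hypothetical, only CPU pods are covered, and each pod's SU count is taken as the larger of its vCPU and RAM ratios, without per-pod rounding, before the project total is rounded up.

```python
import math
from decimal import Decimal

# One CPU SU, as listed in the Service Units table: 1 vCPU, 4 GiB RAM, $0.013/hr.
CPU_SU_VCPU = Decimal("1")
CPU_SU_RAM_GIB = Decimal("4")
CPU_SU_PRICE = Decimal("0.013")


def pod_su_hours(vcpu, ram_gib, hours):
    """SU-hours for one pod: the larger of the vCPU and RAM ratios, times hours."""
    su = max(Decimal(str(vcpu)) / CPU_SU_VCPU, Decimal(str(ram_gib)) / CPU_SU_RAM_GIB)
    return su * Decimal(str(hours))


def project_cost(pods):
    """Sum pod SU-hours, round up once at project level, then apply the price."""
    total_su_hours = sum((pod_su_hours(*pod) for pod in pods), Decimal("0"))
    billed_su_hours = math.ceil(total_su_hours)  # RoundUP(SUM(...))
    return (billed_su_hours * CPU_SU_PRICE).quantize(Decimal("0.01"))


# The three pods from the OpenShift CPU SU example: (vCPU, RAM in GiB, hours).
pods = [(1, 3, 720), (0.1, 8, 720), (2, 4, 720)]
print(project_cost(pods))
```

Rounding once at the project level, rather than per pod, is what keeps fractional pod usage from being overcharged.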

Storage

Storage is charged separately at a rate of $0.009 per TiB per hour ($0.009 TiB/hr).

OpenStack volumes remain provisioned until they are deleted. VMs reserve volumes, and you can also create extra volumes yourself. In OpenShift pods, storage is only provisioned while it is active, and in persistent volumes, storage remains provisioned until it is deleted.

Very Important: Requested/Approved Allocated Storage Quota and Cost

The Storage cost is determined by your requested and approved allocation values. Once approved, these Storage quotas will need to be reserved from the total NESE storage pool for both NERC (OpenStack) and NERC-OCP (OpenShift) resources. For NERC (OpenStack) Resource Allocations, storage quotas are specified by the "OpenStack Volume Quota (GiB)" and "OpenStack Swift Quota (GiB)" allocation attributes. Whereas for NERC-OCP (OpenShift) Resource Allocations, storage quotas are specified by the "OpenShift Request on Storage Quota (GiB)" and "OpenShift Limit on Ephemeral Storage Quota (GiB)" allocation attributes.

Even if you have deleted all volumes, snapshots, and object storage buckets and objects in your OpenStack and OpenShift projects, it is essential to adjust the approved values for your NERC (OpenStack) and NERC-OCP (OpenShift) resource allocations to zero (0). Otherwise, you will still incur a charge for the approved storage, as explained in Billing FAQs.

Keep in mind that you can easily adjust your current resource allocations within your project. Follow this guide on how to use NERC's ColdFront to reduce your Storage quotas for NERC (OpenStack) allocations and this guide for NERC-OCP (OpenShift) allocations.

Storage Example 1:

- Volume or VM with: 500GiB for 699.2hrs
- Will be charged: .5 Storage TiB SU (.5 TiB x 700hrs) x $0.009 TiB/hr = $3.15

Storage Example 2:

- Volume or VM with: 10TiB for 720hrs (24hr x 30days)
- Will be charged: 10 Storage TiB SU (10TiB x 720 hrs) x $0.009 TiB/hr = $64.80

Storage includes all types of storage: Object, Block, Ephemeral, and Image.
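The storage examples above follow the same pattern, sketched here in Python. The function name `storage_cost` is hypothetical. The sketch takes the size in TiB as given in the examples (500GiB is treated there as .5 TiB), so it makes no assumption about how GiB quotas are converted or rounded on an actual invoice.

```python
import math
from decimal import Decimal

# Storage rate from this section: $0.009 per TiB per hour.
STORAGE_RATE_PER_TIB_HOUR = Decimal("0.009")


def storage_cost(size_tib, hours):
    """Cost in USD: TiB x hours (rounded up to a whole hour) x hourly rate."""
    billed_hours = math.ceil(hours)
    cost = Decimal(str(size_tib)) * billed_hours * STORAGE_RATE_PER_TIB_HOUR
    return cost.quantize(Decimal("0.01"))


# Storage Example 1: .5 TiB for 699.2 hours (billed as 700 hours).
print(storage_cost(0.5, 699.2))
# Storage Example 2: 10 TiB for 720 hours.
print(storage_cost(10, 720))
```

Because the charge follows the approved quota rather than actual usage, the `size_tib` argument should be the approved allocation value, not the amount of data currently stored.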

High-Level Function

To provide a more practical way to calculate your usage, here is a function of how the calculation works for OpenShift and OpenStack.

- OpenStack = (Resource (vCPU/RAM/GPU) assigned to VM flavor converted to number of equivalent SUs) * (time VM has been running), rounded up to a whole hour + Extra storage.

  NERC's OpenStack Flavor List: You can find the most up-to-date information on the current NERC's OpenStack flavors with corresponding SUs by referring to this page.

- OpenShift = (Resource (vCPU/RAM) requested by Pod converted to the number of SU) * (time Pod was running), summed up to project level rounded up to the whole hour.

Where Can I View My Current Usage Invoice?

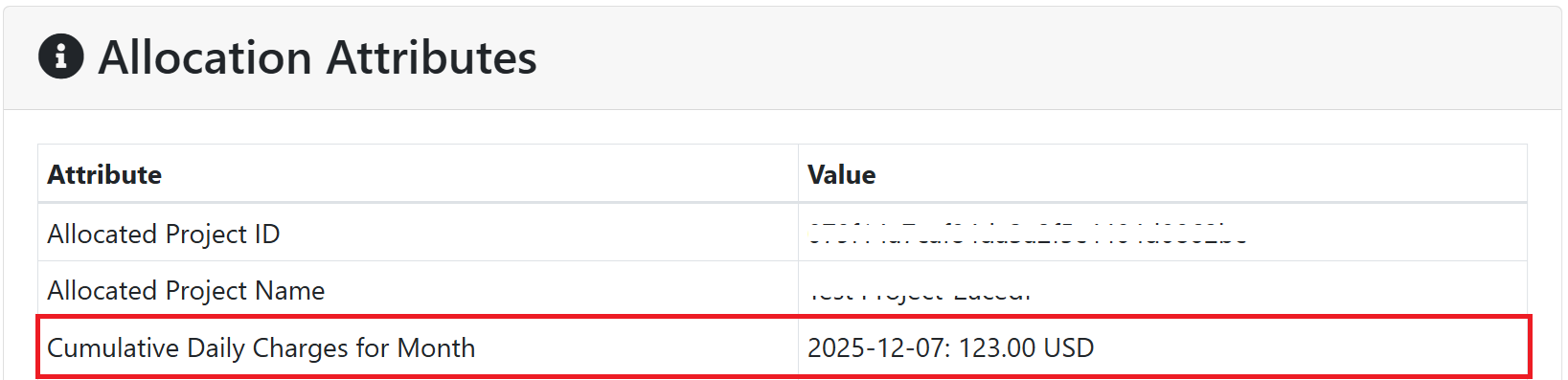

NERC's ColdFront interface now makes it easier to keep track of your daily usage charges for each allocation. A new allocation attribute, Cumulative Daily Charges for Month, is available directly within the Allocation Detail page, giving you a transparent, day-by-day view of your monthly costs.

Steps:

- Log in to the NERC ColdFront interface using the same institutional authentication method you used when registering with NERC via RegApp.
- Navigate to Projects and open the project associated with your allocation.
- Select the specific Allocation you want to review.
- In the Allocation Detail page, look for the Allocation Attribute labeled Cumulative Daily Charges for Month, as shown below:

Understanding the "Cumulative Daily Charges for Month" Attribute Format

The Cumulative Daily Charges for Month allocation attribute stores daily running totals in a simple, date-indexed (UTC) format, along with the total cost in USD.

Each entry reflects the total accumulated billable charges for that month as of the end of that specific day. These values are refreshed nightly by a scheduled CronJob, ensuring that the cumulative totals remain up to date.

For example:

This indicates that as of December 7, 2025, the total billable usage for that allocation for the month has reached 123.00 USD.

Cumulative Daily Charges for Month

The value of this quota attribute is updated nightly to incorporate the latest available invoice data, ensuring daily cumulative totals remain current.

How to Use this Information

You can use the daily cumulative totals to:

- Monitor your month-to-date spending with near real-time visibility.
- Identify any unusual spikes or sudden changes in usage.
- Plan workloads and manage your budget more effectively.
- Communicate usage patterns and trends to your team or project members.
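To spot spikes, it helps to convert the cumulative totals into per-day charges. The Python sketch below shows one way to do that. It assumes the attribute value has already been parsed into a dictionary keyed by ISO date string; that representation, the function name `daily_increments`, and the first two sample values are hypothetical illustrations, not the exact format stored in ColdFront.

```python
from decimal import Decimal


def daily_increments(cumulative):
    """Turn date-indexed cumulative totals into per-day charges.

    The first entry's increment covers everything billed from the start
    of the month up to and including that day.
    """
    increments = {}
    previous = Decimal("0")
    for day in sorted(cumulative):  # ISO dates sort chronologically as strings
        total = Decimal(cumulative[day])
        increments[day] = total - previous
        previous = total
    return increments


# Hypothetical sample data; only the 2025-12-07 total of 123.00 USD
# comes from the example in this section.
sample = {"2025-12-05": "80.00", "2025-12-06": "95.50", "2025-12-07": "123.00"}

for day, charge in daily_increments(sample).items():
    print(day, charge)
```

A day whose increment is much larger than its neighbors is a good candidate for a closer look at what was running.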

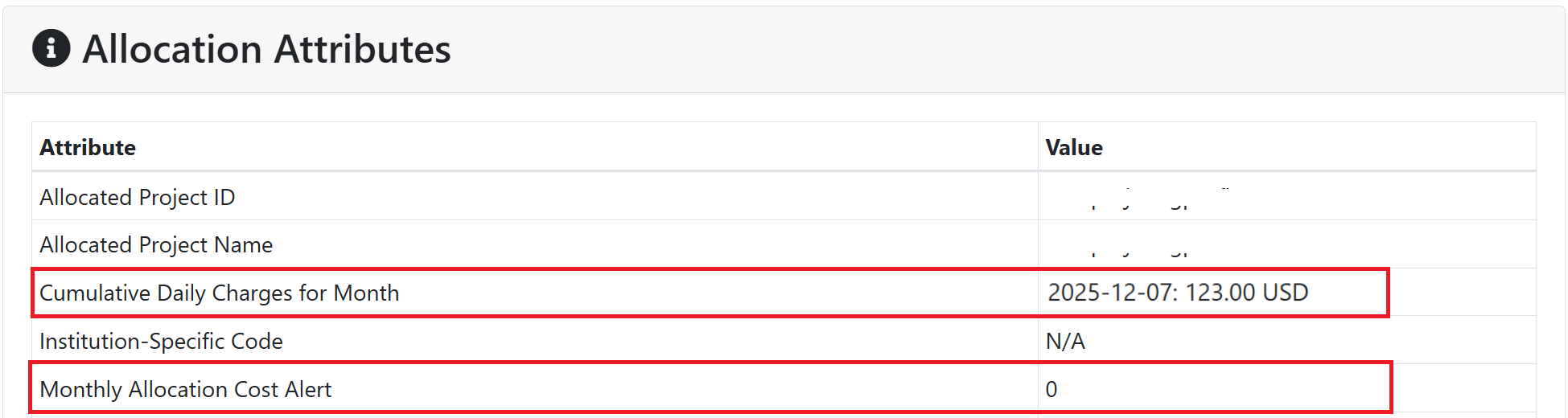

Monthly Allocation Alert Emails

In addition, a new Allocation Attribute labeled Monthly Allocation Cost Alert is now available to provide automated cost alert notifications for Project PIs and Manager(s), as shown below:

This attribute helps users monitor potential budget overruns by allowing them to set a threshold amount in USD. When the cumulative charges for the month exceed this value, email alerts are automatically sent to the Project PIs and Manager(s).

Important Note

By default, the Monthly Allocation Cost Alert allocation attribute is set to 0 for all existing allocations. In this state, no email notifications will be sent. To enable alerts, Project PIs or Manager(s) must set a preferred threshold value (in USD) for this attribute by submitting a Change Request.

If an allocation has the Monthly Allocation Cost Alert attribute configured, ColdFront will:

- Monitor the total billable cost for the month to maintain better control over spending and ensure proper oversight.
- Send alert email notifications to the Project PI and Manager(s) when the allocation's monthly charges exceed the configured threshold.
- Ensure Project PIs and Manager(s) are promptly informed of over-usage through automated email alerts, eliminating the need to wait until the end of the monthly billing cycle to review charges.
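The alert rule described above amounts to a simple comparison, sketched here in Python. This is an illustration of the documented behavior only, not ColdFront's implementation, and the function name `should_alert` is hypothetical.

```python
from decimal import Decimal


def should_alert(month_to_date_usd, threshold_usd):
    """Return True when month-to-date charges exceed a configured threshold.

    A threshold of 0 is the default and means alerts are disabled.
    """
    threshold = Decimal(threshold_usd)
    if threshold == 0:
        return False
    return Decimal(month_to_date_usd) > threshold


print(should_alert("123.00", "0"))       # alerts disabled by default
print(should_alert("123.00", "100.00"))  # charges exceed the threshold
print(should_alert("123.00", "150.00"))  # still under the threshold
```

Setting the threshold somewhat below your actual monthly budget leaves time to shelve VMs or scale down pods before the budget is reached.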

How to Pay?

To ensure a comprehensive understanding of the billing process and payment options for NERC offerings, we advise PIs/Managers to visit individual pages designated for each institution. These pages provide detailed information specific to each organization's policies and procedures regarding their billing. By exploring these dedicated pages, you can gain insights into the preferred payment methods, invoicing cycles, breakdowns of cost components, and any available discounts or offers. Understanding the institution's unique approach to billing ensures accurate planning, effective financial management, and a transparent relationship with us.

If you have any common questions or need further information, see our Billing FAQs for comprehensive answers.

SU Conservation - How to Save Cost?

With SUs being the primary metric for resource consumption, it's crucial to actively manage your workloads when they're not in use.

Below are practical ways to conserve SUs across different NERC services:

NERC OpenStack

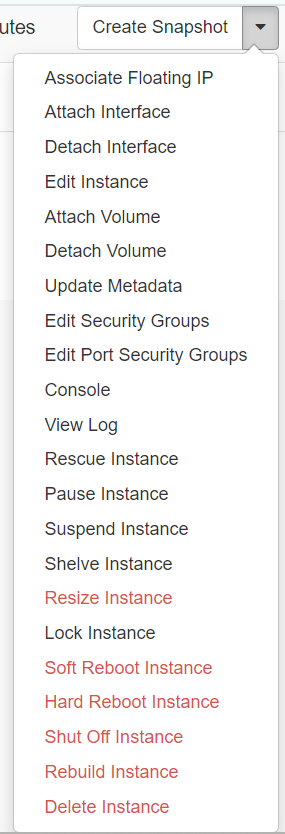

Once you're logged in to NERC's Horizon dashboard, navigate to Project -> Compute -> Instances.

After launching an instance, several options are available under the Actions menu located on the right-hand side of your screen, as shown here:

Shelve your VM when not in use:

Only Available From Next Billing Cycle

We will implement the invoicing piece of this feature as of the June 2025 Invoicing cycle.

In NERC OpenStack, if your VM does not need to run continuously, you can shelve it to free up consumed resources such as vCPUs, RAM, and disk. This action releases all allocated resources while preserving the VM's state and metadata.

- Click Action -> Shelve Instance.
- Releases all computing resources (i.e., GPU, vCPU, RAM), while preserving the disk and metadata.
- We strongly recommend detaching volumes before shelving.
- Status will change to Shelved Offloaded.

You can later unshelve the VM without needing to recreate it - allowing you to reduce costs without losing any progress.

- To unshelve the instance, click Action -> Unshelve Instance.

For more details on shelving a VM, see the explanation here.

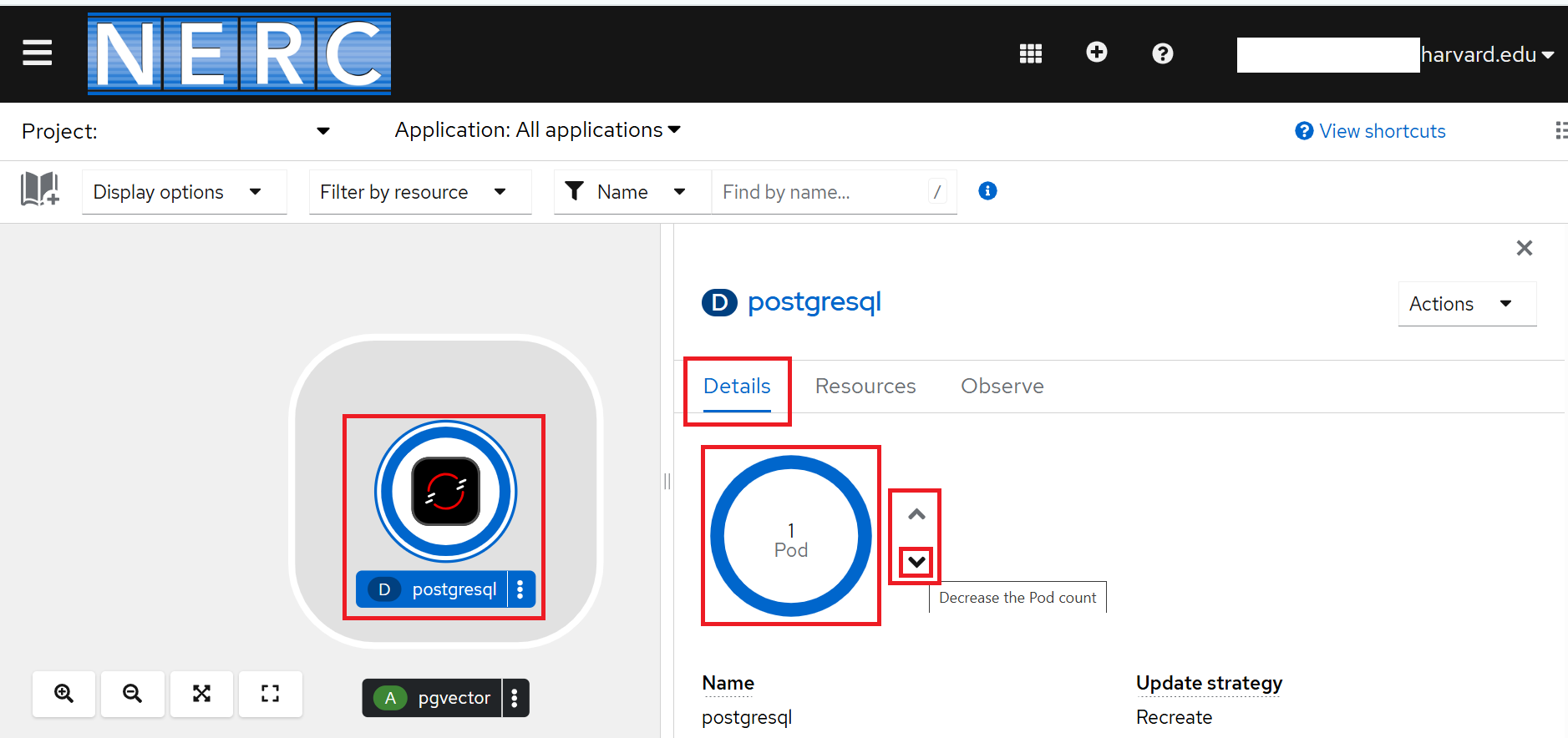

NERC OpenShift

Scale your pods to 0 replicas:

In NERC OpenShift, if your application or job is idle, you can scale its pod replica count to 0. This effectively frees up compute resources (i.e., GPU, vCPU, and RAM) while retaining the configuration, metadata, environment settings, and persistent volume claims (PVCs) for future use.

Using Web Console

- Go to the NERC's OpenShift Web Console.
- In the Navigation Menu, navigate to the Workloads -> Topology menu.
- Click the pod or application you want to scale to see the Overview panel to the right.
- In the Details tab (usually the default tab when you open the deployment):
  - Look for the Pod count or Replicas section.
  - Use the up/down arrows next to the number to adjust the replica count.
  - Set it to 0 by clicking the down arrow, as shown below:
- OpenShift will automatically scale down the pods to 0.

When you need to run your application again, you can scale up the pod count or replicas to reclaim the necessary resources.

Using the OpenShift oc CLI

Prerequisite

- Install and configure the OpenShift CLI (oc). See How to Setup the OpenShift CLI Tools for more information.

Information

Some users may have access to multiple projects. Run the following command to switch to a specific project space: `oc project <your-project-namespace>`.

Please confirm the correct project is being selected by running `oc project`, as shown below:

`oc project`

`Using project "<your_openshift_project_where_pod_deployed>" on server "https://api.shift.nerc.mghpcc.org:6443".`

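If your application or job is idle, you can scale your pod's replica count to 0 with the `oc scale` command. For example, assuming the workload is a Deployment (the name is a placeholder): `oc scale deployment/<your-deployment-name> --replicas=0`

When you need to run your application again, you can scale up the pod count or replicas to reclaim the necessary resources by running the same command with the replica count you need, for example: `oc scale deployment/<your-deployment-name> --replicas=1`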

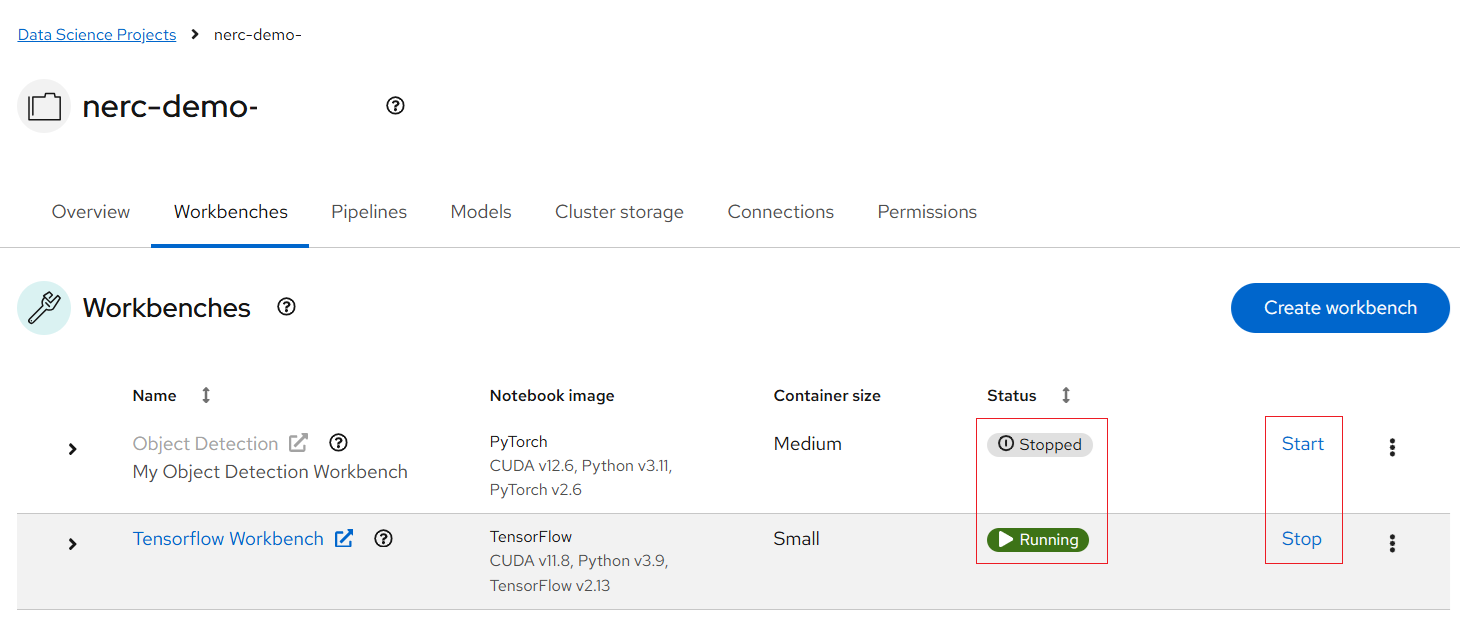

NERC RHOAI

Toggle the Workbench to "Stopped":

In NERC Red Hat OpenShift AI (RHOAI), workbench environments can be toggled between Running and Stopped states.

- Go to the NERC's OpenShift Web Console.
- After logging in to the NERC OpenShift console, access the NERC's Red Hat OpenShift AI dashboard by clicking the application launcher icon (the black-and-white icon that looks like a grid), located on the header.
- When you've completed a workload such as model development or experimentation using the Data Science Project (DSP) Workbench, you can stop the compute resources used by the workbench by clicking Stop, next to the Status column for the workbench. The status of the workbench then changes from Running to Stopped, as shown below:

  This action immediately releases the compute resources allocated to the notebook environment within the Workbench setup.

To restart your workbench, click Start next to the Status column for the workbench. The status will change from Stopped to Starting while the server initializes, and then to Running once the workbench has successfully started.